Simultaneous Localization and Mapping (SLAM) technology is at the heart of many recent advances in autonomous robotics.

Well before the earliest robots were constructed, engineers, manufacturers, scientists, science fiction enthusiasts, and the public at large had envisioned them as humanoid machines capable of navigating the world much the way humans do. However, a robot that can scan its environment, map that scan to a pre-programmed conception of its location, and then continually monitor and update its internal understanding of its location as it moves through the world in real time is (understandably) much harder to achieve than it is to envision.

Several solutions to this problem exist today, known as Simultaneous Localization and Mapping (SLAM) systems. They are what allow autonomous robots to move about a factory floor without colliding with objects or people. SLAM has had well-known limitations for many years, but modern refinements are not only improving the technology, they are making new tasks possible. To appreciate how new capabilities are changing the field, it helps to understand how this technology works and why longstanding limitations are quickly becoming problems of the past.

Challenges of SLAM Technology and Current Advances

One of the most reliable early sensing technologies for SLAM was LiDAR (Light Detection and Ranging), which sends out pulses of light and times their reflections to measure distance, much as sonar does with sound. SLAM systems that rely on LiDAR use this input to build a point cloud of the surrounding environment. While this works well to help robots navigate without bumping into things, it has limitations. For instance, LiDAR can’t “read” a sign, recognize an object, or detect what its dimensions might be beyond the sides that are facing the sensor.
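To make the ranging idea concrete, here is a minimal sketch of how a pulse's round-trip time becomes a distance, and how a sweep of such distances becomes a flat point cloud. The function names and the planar (2D) scan layout are assumptions for illustration, not any particular sensor's API.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def range_from_time_of_flight(round_trip_s):
    """Distance to the reflecting surface in meters.

    The pulse travels out and back, so the one-way distance is half
    of the total path covered during the round trip.
    """
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


def scan_to_points(angles_rad, ranges_m):
    """Convert a planar scan of (bearing, range) pairs into (x, y) points.

    Points are expressed in the sensor's own frame (hypothetical layout:
    x forward, y to the left).
    """
    return [
        (r * math.cos(a), r * math.sin(a))
        for a, r in zip(angles_rad, ranges_m)
    ]
```

Under these assumptions, a round trip of 20 nanoseconds corresponds to a surface roughly 3 m away. Note that every point produced this way is only a position: nothing in the data says what the object is, which is the limitation described above.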

By contrast, vision systems equipped with multiple cameras can not only measure depth, but can also be trained using machine learning algorithms to interpret what they’re “seeing” in order to make informed decisions. For instance, they can be trained to identify a person walking and predict their movements, or they can learn to recognize the shape of an object to estimate what its dimensions might be. They can also spot fiducial markers, which serve as known reference points for learning the layout of a factory floor.
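As a rough illustration of how two cameras can measure depth, the sketch below applies the standard stereo triangulation relation: depth equals focal length times baseline divided by disparity. It assumes an idealized, rectified pair of identical pinhole cameras; the function name and the example numbers are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_m, disparity_px):
    """Depth in meters of a point seen by both cameras of a stereo pair.

    focal_px     -- focal length expressed in pixels
    baseline_m   -- distance between the two camera centers, in meters
    disparity_px -- horizontal shift of the point between the two images

    Assumes rectified images, so matching points lie on the same row.
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a finite depth")
    return focal_px * baseline_m / disparity_px
```

With a 700-pixel focal length and a 12 cm baseline, a disparity of 42 pixels works out to about 2 m. Nearby objects shift more between the two images than distant ones, which is why depth accuracy falls off with range.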

The biggest downside of vision systems in the past has been the processing time it takes for them to run these calculations and make corresponding decisions. As processing hardware has become faster, this limitation has become less of a concern.

Another challenge of many SLAM systems is what is known as the loop closure problem. If a robot scanning its environment makes an error, that mapping error can compound as the robot moves, to the extent that it becomes lost unless it can recognize a place it has already visited and correct its map accordingly. By combining technologies, robots can rely on both landmarks and grid maps to create a more accurate picture of where they are in a landscape.
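The toy example below shows how a small, repeated heading error compounds over a lap, and how recognizing the starting point lets the accumulated error be corrected. It is a deliberately simplified sketch: the linear error spreading in `close_loop` stands in for the optimization that real SLAM systems perform, and all names and numbers are assumptions chosen for illustration.

```python
import math


def dead_reckon(steps, heading_bias_rad=0.0):
    """Integrate (turn, distance) odometry steps into a list of (x, y) poses.

    heading_bias_rad is added to every step's turn to mimic a small
    systematic sensor error.
    """
    x = y = theta = 0.0
    path = [(x, y)]
    for turn, distance in steps:
        theta += turn + heading_bias_rad
        x += distance * math.cos(theta)
        y += distance * math.sin(theta)
        path.append((x, y))
    return path


def close_loop(path):
    """Spread the end-point error back along the path.

    Assumes the robot has recognized that its final pose is the same
    place as its first, so any gap between them is accumulated drift.
    """
    ex = path[-1][0] - path[0][0]
    ey = path[-1][1] - path[0][1]
    n = len(path) - 1
    return [(x - ex * i / n, y - ey * i / n) for i, (x, y) in enumerate(path)]


# A square lap: four sides of ten 1 m steps, turning 90 degrees at each corner.
steps = []
for side in range(4):
    steps.append((math.pi / 2 if side > 0 else 0.0, 1.0))
    steps.extend([(0.0, 1.0)] * 9)

ideal = dead_reckon(steps)                            # ends back at the start
drifted = dead_reckon(steps, heading_bias_rad=0.01)   # ends well away from it
corrected = close_loop(drifted)                       # start and end agree again
```

A bias of only 0.01 radians per step leaves the robot's estimated position meters away from where it actually is after a 40 m lap, which is how a robot ends up "lost" if it never recognizes a place it has seen before.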

Applications for SLAM Technology

With machine vision technology growing more capable of handling real-world environments, mobile robots are becoming a more viable option in a number of common situations. These include:

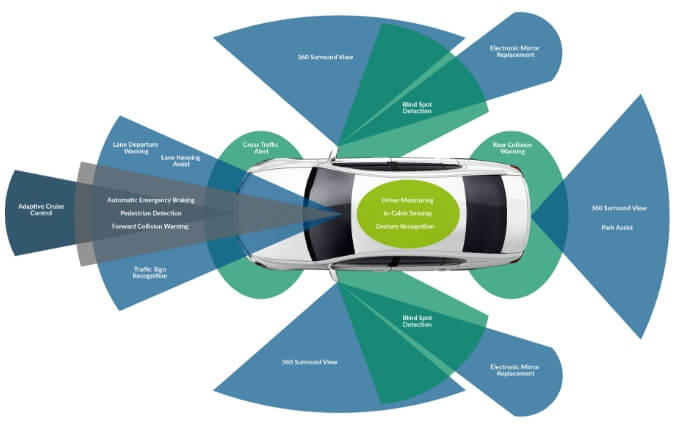

- Autonomous vehicles. These days, range sensors such as radar and, increasingly, LiDAR are a common feature in many automobiles, supporting collision avoidance software. If you’ve ever had your car beep to alert you to another vehicle in your blind spot, or if you’ve ever used adaptive cruise control that slows down to adjust for cars ahead, then you have experienced this kind of sensing for yourself. Machine vision takes this to the next level by identifying road markings, landmarks, and road signs, which can then be interpreted by piloting software to provide directions for the vehicle.

- Factory navigation for robots. Perhaps the most widespread application of SLAM technology today is on the factory floor. Mobile robots are being used to stock and retrieve inventory, palletize shipments, assist human workers, and perform automated tasks on parts too large to move through an assembly line. While navigation systems alone can help them move through a work area, the combination of machine vision with LiDAR increases worker safety while expanding the types of tasks robots can perform.

- Consumer robots. Household robotics is still a new industry, with only a limited range of options for robots that can provide household assistance at affordable prices. New SLAM systems have the potential to expand the capabilities of consumer robots. For instance, a Roomba that can map a floor plan can follow a more efficient cleaning route while also avoiding pets and small children. A lawnmower with machine vision can be programmed to stay within a property’s boundaries while avoiding flower beds.

- Logistics and last mile delivery. Many of the improvements in parcel delivery over the past few years have come from solutions that help companies leverage economies of scale to distribute packages more efficiently. One of the remaining roadblocks comes at the end of this logistical chain, when a package has to make the trip from a distribution center to a doorstep. Some package carriers are turning to automation as a possible solution, either through mobile robots that can navigate street traffic, or via drones that can fly above it. Either way, SLAM systems will be needed to guide a robot to its destination, to allow it to avoid obstacles, and to help it locate appropriate drop-off points.

Mobile technology is the future of modern industry.

At Eagle, we recognize that, for businesses to stay at the leading edge of their industry, they need access to the latest solutions. We make sure to stay on top of emerging technologies so that we can understand how they can be used to create new automation solutions. Our focus on innovation has helped us develop advanced systems in industries ranging from autonomous vehicles to vertical farming. If you have an automation need, contact us, and we would be happy to discuss solutions with you.

Connect With Eagle Technologies LinkedIn

Eagle Technologies, headquartered in Bridgman, MI

Eagle builds the machines that automate manufacturing. From high-tech robotics to advanced product testing capabilities, Eagle offers end-to-end manufacturing solutions for every industry.